
Anton's Research Ramblings

31 May 2012

Phong Lighting in Shaders

Nothing too hard to begin with:

- I extended my .obj parser to export normals to the JSON file, right after where I exported the array of texture coordinates (once again remembering to put a comma in between)

- I created a new vertex buffer object and copied the normal data from the JSON file into it

- I added a new per-vertex attribute in the same way that I added the attribute for texture coordinates

- I created a view matrix using lookAt from gl-matrix.js and sent it to the shaders as a uniform variable

- I created a projection matrix using perspective from the same library

- I created a light position and some light colours

- I did all the usual calculations for the ambient, diffuse, and specular components

- I sent my per-attribute normals straight to the fragment shader to use as a colour to make sure they were okay

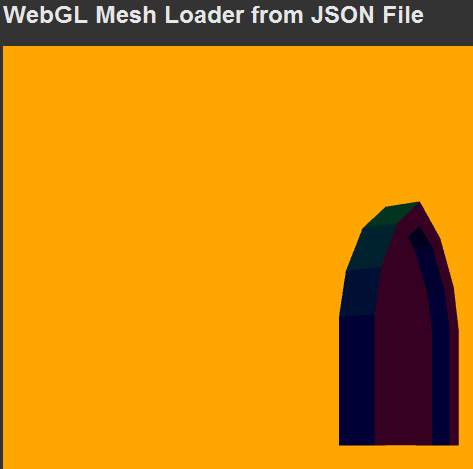

(see 1st picture, below)

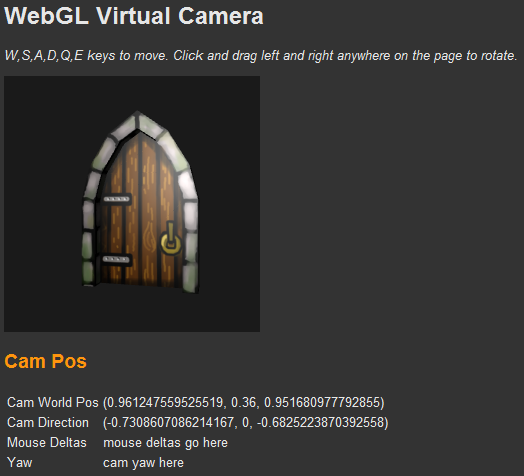

- and then something weird happened... (see the 2nd picture).
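For reference, here is roughly what the lookAt and perspective calls from the list above compute. This is a minimal sketch in plain JavaScript rather than gl-matrix.js itself (the library's function signatures have changed between versions, so I've avoided relying on them). The helper names are hypothetical, and the matrices are 16-element arrays in the column-major order that uniformMatrix4fv expects.

```javascript
// Small vector helpers (hypothetical names, not gl-matrix.js).
function sub(a, b) { return [a[0] - b[0], a[1] - b[1], a[2] - b[2]]; }
function dot(a, b) { return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]; }
function cross(a, b) {
  return [a[1] * b[2] - a[2] * b[1],
          a[2] * b[0] - a[0] * b[2],
          a[0] * b[1] - a[1] * b[0]];
}
function normalize(a) {
  const len = Math.hypot(a[0], a[1], a[2]);
  return [a[0] / len, a[1] / len, a[2] / len];
}

// View matrix: moves the world so the eye sits at the origin looking down -Z.
// Matrices are column-major: element (row, col) lives at [col * 4 + row].
function lookAt(eye, center, up) {
  const f = normalize(sub(center, eye)); // forward
  const s = normalize(cross(f, up));     // side (the camera's right)
  const u = cross(s, f);                 // re-orthogonalised up
  return [
    s[0], u[0], -f[0], 0,
    s[1], u[1], -f[1], 0,
    s[2], u[2], -f[2], 0,
    -dot(s, eye), -dot(u, eye), dot(f, eye), 1
  ];
}

// Projection matrix in the style of gluPerspective (fovy in degrees).
function perspective(fovyDeg, aspect, near, far) {
  const f = 1 / Math.tan((fovyDeg * Math.PI) / 360); // cot(fovy / 2)
  const nf = 1 / (near - far);
  return [
    f / aspect, 0, 0, 0,
    0, f, 0, 0,
    0, 0, (far + near) * nf, -1,
    0, 0, 2 * far * near * nf, 0
  ];
}

// Multiply a column-major 4x4 matrix by the point [x, y, z, 1].
function transformPoint(m, p) {
  const out = [];
  for (let row = 0; row < 4; row++) {
    out.push(m[row] * p[0] + m[4 + row] * p[1] + m[8 + row] * p[2] + m[12 + row]);
  }
  return out;
}

const view = lookAt([0, 0, 5], [0, 0, 0], [0, 1, 0]);
const proj = perspective(90, 1, 1, 10);
console.log(transformPoint(view, [0, 0, 0])); // world origin in eye space
```

For example, lookAt([0, 0, 5], [0, 0, 0], [0, 1, 0]) puts the world origin 5 units in front of the camera, at (0, 0, -5) in eye space, and perspective(90, 1, 1, 10) maps the near plane (z = -1) to depth -1 and the far plane (z = -10) to depth +1 after the divide by w.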

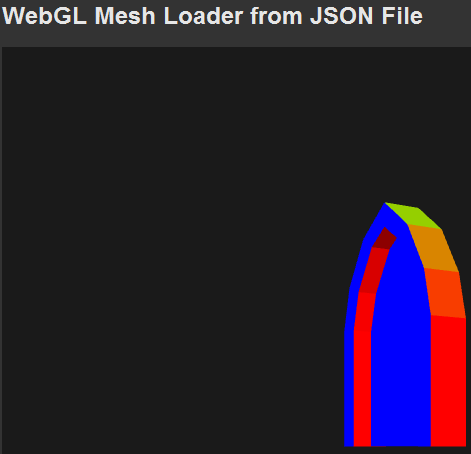

Using the local-space normals (the unconverted values from my JSON file) as colours. Seems to be fine! Red = facing right, Green = up, Blue = forward.


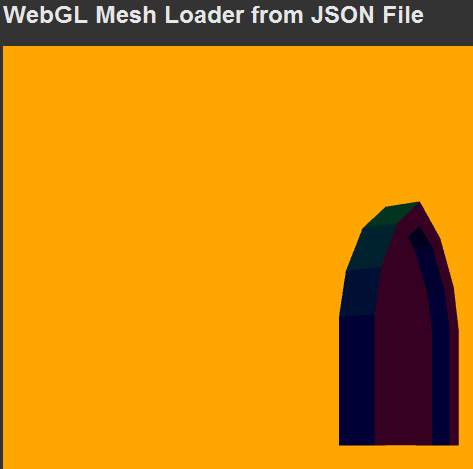

Testing the normals after converting them to eye-space (Red = towards the view-point's right, Green = up, Blue = towards the view-point). But why does it look washed out? (Sorry, ignore that the background colour is different.)


At first I thought that I must be scaling my normals and forgetting to re-normalise them. Nope: after re-normalising there were still dark colours, and it looked grey-ish where it should have been black.
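For anyone chasing the same suspicion: the usual way to get eye-space normals is to multiply them by the normal matrix (the inverse transpose of the upper-left 3x3 of the model-view matrix) and then re-normalise, because any scaling in the model-view matrix changes their length. Here is a minimal sketch in plain JavaScript; the function names are hypothetical and the 3x3 matrices are column-major arrays. With only rotations and uniform scaling, the upper-left 3x3 itself followed by a re-normalise would also do.

```javascript
// Inverse transpose of a 3x3 matrix m stored column-major (m[col * 3 + row]).
function normalMatrix(m) {
  // Row-major names for the entries: row 0 = p q r, row 1 = s t u, row 2 = v w x.
  const p = m[0], s = m[1], v = m[2];
  const q = m[3], t = m[4], w = m[5];
  const r = m[6], u = m[7], x = m[8];
  // Cofactors: (M^-1)^T is the cofactor matrix divided by det(M).
  const c00 = t * x - u * w, c01 = u * v - s * x, c02 = s * w - t * v;
  const c10 = r * w - q * x, c11 = p * x - r * v, c12 = q * v - p * w;
  const c20 = q * u - r * t, c21 = r * s - p * u, c22 = p * t - q * s;
  const det = p * c00 + q * c01 + r * c02;
  // Back to column-major order.
  return [c00, c10, c20, c01, c11, c21, c02, c12, c22].map(c => c / det);
}

// Multiply a column-major 3x3 matrix by a 3-vector.
function mulMat3Vec3(m, a) {
  return [
    m[0] * a[0] + m[3] * a[1] + m[6] * a[2],
    m[1] * a[0] + m[4] * a[1] + m[7] * a[2],
    m[2] * a[0] + m[5] * a[1] + m[8] * a[2]
  ];
}

function normalize(a) {
  const len = Math.hypot(a[0], a[1], a[2]);
  return [a[0] / len, a[1] / len, a[2] / len];
}

// Eye-space normal: transform by the normal matrix, then re-normalise.
function eyeSpaceNormal(modelView3x3, n) {
  return normalize(mulMat3Vec3(normalMatrix(modelView3x3), n));
}

// A model-view matrix that stretches x by 2.
const stretched = [2, 0, 0, 0, 1, 0, 0, 0, 1];
console.log(eyeSpaceNormal(stretched, [1, 1, 0])); // roughly [0.447, 0.894, 0]
```

Multiplying [1, 1, 0] by the plain stretched matrix would give [2, 1, 0], which is no longer perpendicular to the stretched surface; the inverse transpose gives [0.5, 1, 0] before re-normalising, which is.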

I used the dot product result, and also its negative, as colours, and both seemed fine. Then it occurred to me that my usual lighting equation from OpenGL was doing something unexpected:

vec4 Id = Ld * Kd * dot (s, normal);

Where:

- Id is the RGBA intensity of diffuse light,

- Ld is the RGBA colour of the diffuse light,

- Kd is the RGBA coefficient of diffuse reflection for the surface (I used my texture map for this), and

- dot (s, normal) is the dot product of the surface normal and the direction (s) from the surface to the light source. For unit-length vectors this is the cosine of the angle between them, so it ranges from -1 to 1, and it is only between 0 and 1 when the surface faces the light.
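Since the shading happens in GLSL, it can be handy to mirror the maths somewhere easier to poke at. Here is a minimal sketch of the full per-point Phong calculation (ambient + diffuse + specular) in plain JavaScript. It assumes everything is already in eye space (so the eye is at the origin); all the names are hypothetical, the dot product is clamped to 0 for surfaces facing away from the light, and the output alpha is simply set to 1.

```javascript
function sub(a, b) { return [a[0] - b[0], a[1] - b[1], a[2] - b[2]]; }
function scale(a, k) { return [a[0] * k, a[1] * k, a[2] * k]; }
function dot(a, b) { return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]; }
function normalize(a) { return scale(a, 1 / Math.hypot(a[0], a[1], a[2])); }

// Component-wise product of two colours, scaled by k (RGB only).
function modulate(c1, c2, k) {
  return [c1[0] * c2[0] * k, c1[1] * c2[1] * k, c1[2] * c2[2] * k];
}

// Phong shading at one eye-space point. Vectors are [x, y, z];
// light and material colours are [r, g, b, a]; returns [r, g, b, a].
function phong(pos, normal, lightPos, light, material) {
  const n = normalize(normal);
  const s = normalize(sub(lightPos, pos));   // surface -> light
  const v = normalize(scale(pos, -1));       // surface -> eye (eye at origin)
  const sDotN = Math.max(dot(s, n), 0);      // clamp: facing away = no diffuse
  const r = sub(scale(n, 2 * dot(n, s)), s); // s mirrored about n
  const spec = sDotN > 0 ? Math.pow(Math.max(dot(r, v), 0), material.shininess) : 0;

  const ambient = modulate(light.La, material.Ka, 1);
  const diffuse = modulate(light.Ld, material.Kd, sDotN);
  const specular = modulate(light.Ls, material.Ks, spec);
  return [
    ambient[0] + diffuse[0] + specular[0],
    ambient[1] + diffuse[1] + specular[1],
    ambient[2] + diffuse[2] + specular[2],
    1.0 // alpha set explicitly instead of being scaled by the lighting
  ];
}

const light = { La: [0.1, 0.1, 0.1, 1], Ld: [1, 1, 1, 1], Ls: [1, 1, 1, 1] };
const material = { Ka: [1, 1, 1, 1], Kd: [0.5, 0.5, 0.5, 1], Ks: [0.2, 0.2, 0.2, 1], shininess: 10 };
// Light at the eye, surface facing the eye: about 0.1 + 0.5 + 0.2 = 0.8 per channel.
console.log(phong([0, 0, -5], [0, 0, 1], [0, 0, 0], light, material));
```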

It works like this mathematically (component-wise multiplication, with factor standing for the result of dot (s, normal)):

Id.r = Ld.r * Kd.r * factor;

Id.g = Ld.g * Kd.g * factor;

Id.b = Ld.b * Kd.b * factor;

Id.a = Ld.a * Kd.a * factor;

Now, the alpha channel is also multiplied by the factor. Normally, in OpenGL this doesn't do anything unless you explicitly enable blending (glEnable(GL_BLEND)) first; then you can use it to make your fragments transparent. I suspected something, so I set Id.a = 1.0 after the lighting equation, and it fixed the problem! It turns out that in WebGL the canvas itself is blended with the page by default: the drawing buffer has an alpha channel (unless you create the context with alpha: false) and the browser composites it over the HTML page, so the background of the page was showing through the fragments and making them look grey (my webpage was grey).
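To see why grey crept in, here's the arithmetic of that compositing step as a small sketch. It assumes the WebGL defaults (alpha: true and premultipliedAlpha: true), where the browser treats the canvas colour as already multiplied by alpha and composites it over the page as canvas + (1 - alpha) * page. The light, surface and page colours are illustrative values, and the function names are hypothetical.

```javascript
// Browser-style "source-over" compositing of one premultiplied-alpha
// canvas pixel [r, g, b, a] over an opaque page colour [r, g, b].
function compositeOverPage(canvasPixel, pageRGB) {
  const a = canvasPixel[3];
  return [0, 1, 2].map(i => canvasPixel[i] + (1 - a) * pageRGB[i]);
}

// Diffuse output for a white light on a white surface: with Ld and Kd all
// ones, every channel (alpha included) is just the dot-product factor.
function diffuseOutput(factor, forceOpaque) {
  const Id = [factor, factor, factor, factor];
  if (forceOpaque) Id[3] = 1.0; // the Id.a = 1.0 fix
  return Id;
}

const greyPage = [0.5, 0.5, 0.5]; // illustrative grey web page
const factor = 0.2;               // a mostly unlit part of the model

const before = compositeOverPage(diffuseOutput(factor, false), greyPage);
const after = compositeOverPage(diffuseOutput(factor, true), greyPage);
console.log(before); // about 0.6 per channel: washed out towards the page grey
console.log(after);  // 0.2 per channel: stays dark, as intended
```

Another way out, instead of forcing the alpha in the shader, would be to ask for a canvas without an alpha channel, e.g. canvas.getContext("webgl", { alpha: false }) (older browsers used the name "experimental-webgl"), so the page can never show through.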

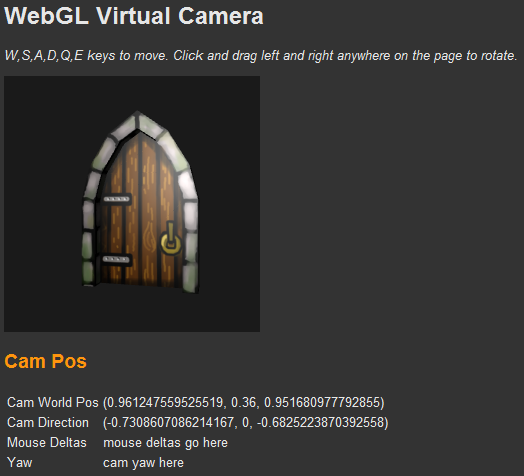

It worked out pretty nicely after figuring out the alpha-blend WebGL thing.


Virtual Camera with Controls

Getting started was pretty easy. You have two event systems that you can use: document.event and canvas.event, i.e. handlers attached to the whole document or just to the canvas element. The canvas version works exactly the same way, but its mouse events only fire while the mouse cursor is inside the canvas area. This would be handy for things like mouse-picking or checking for a change of user focus.

I found the focus a little problematic and ended up only allowing the mouse cursor to do things whilst a key was held down. You also only get absolute screen pixel positions, which limits your options for first-person cameras where you might want to keep turning around even after the mouse cursor reaches the side of the window. If you can design around these limitations then you are fine. The event system uses numeric codes (event.keyCode) to identify each keyboard key. There is a list here:

http://stackoverflow.com/questions/1465374/javascript-event-keycode-constants
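A minimal sketch of the "mouse only works while a key is held" approach, written so the logic can be tested without a browser: the handler methods take event-like objects using the real DOM property names (keyCode, clientX, clientY) and turn the absolute pixel positions into per-move deltas. The class name and the choice of Shift (keyCode 16) as the look key are assumptions for illustration.

```javascript
// Tracks which keys are down and turns absolute mouse positions into
// deltas, but only while the chosen "look" key is held.
class MouseLook {
  constructor(lookKeyCode) {
    this.lookKeyCode = lookKeyCode;
    this.keysDown = new Set();
    this.lastX = null;
    this.lastY = null;
    this.yaw = 0;   // accumulated horizontal movement, in pixels
    this.pitch = 0; // accumulated vertical movement, in pixels
  }

  onKeyDown(e) { this.keysDown.add(e.keyCode); }

  onKeyUp(e) {
    this.keysDown.delete(e.keyCode);
    // Forget the last position so the next press doesn't cause a jump.
    if (e.keyCode === this.lookKeyCode) { this.lastX = null; this.lastY = null; }
  }

  onMouseMove(e) {
    if (!this.keysDown.has(this.lookKeyCode)) return; // ignore unless key held
    if (this.lastX !== null) {
      this.yaw += e.clientX - this.lastX;
      this.pitch += e.clientY - this.lastY;
    }
    this.lastX = e.clientX;
    this.lastY = e.clientY;
  }
}

// In a page (not run here), something like:
// const look = new MouseLook(16);
// document.addEventListener("keydown", e => look.onKeyDown(e));
// document.addEventListener("keyup", e => look.onKeyUp(e));
// canvas.addEventListener("mousemove", e => look.onMouseMove(e));
```

Because the positions are absolute, the deltas stop once the cursor hits the edge of the window, which is exactly the first-person limitation mentioned above.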

You can use HTML5's built-in keyboard and mouse event system to easily grab user input for WebGL.


I first went for the basics and wrote a Camera object where pressing the W, A, S, and D keys would move the camera along the -Z, -X, +Z, and +X axes, respectively. No sweat, but the camera was always facing forward, so I used the mouse cursor to rotate a vector that I was passing to the lookAt function.
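The W, A, S, D mapping above boils down to a lookup from key codes to axis directions. Here is a minimal sketch; the key codes are the standard keyCode values for those letters (their upper-case ASCII codes: W = 87, A = 65, S = 83, D = 68), while the function name, speed and time step are hypothetical.

```javascript
// keyCode -> world-space direction the camera moves in.
const MOVE_DIRS = {
  87: [0, 0, -1], // W: -Z
  65: [-1, 0, 0], // A: -X
  83: [0, 0, 1],  // S: +Z
  68: [1, 0, 0]   // D: +X
};

// Returns the new camera position after the held keys act for dt seconds.
function moveCamera(position, keysDown, speed, dt) {
  const next = position.slice();
  for (const code of keysDown) {
    const dir = MOVE_DIRS[code];
    if (!dir) continue; // ignore keys that don't move the camera
    for (let i = 0; i < 3; i++) next[i] += dir[i] * speed * dt;
  }
  return next;
}

// Holding W for half a second at 2 units/s: one unit along -Z.
console.log(moveCamera([0, 0, 5], new Set([87]), 2, 0.5));
```

Once the camera can turn, the fixed axes get swapped for the camera's own forward vector and a sideways vector, which is where cross() comes in.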

I ran into a very strange problem then. Moving forwards and backwards in the direction of the camera was fine, but moving left and right was not. I used the cross() function from gl-matrix.js to define a sideways-pointing vector, but the function was not returning the correct result when I laid it out like this: var sideVec = vec3.cross (forwardVec, [0, 1, 0]); I changed the call to pass sideVec as a third parameter, vec3.cross (forwardVec, [0, 1, 0], sideVec);, and it was fine.
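My best guess at the cause, assuming the gl-matrix.js 1.x API that was current at the time: vec3.cross(vec, vec2, dest) writes its result into the optional dest and, if dest is left out, into vec itself. So the first version would overwrite forwardVec with the side vector and return that same array as sideVec. (Later versions, 2.x onwards, changed the signature to vec3.cross(out, a, b).) The sketch below is a hypothetical stand-alone re-implementation of that 1.x-style convention, not the library's own code.

```javascript
// Cross product with an optional destination, mimicking the old-style
// convention: if dest is omitted, the result is written into vec itself.
function cross(vec, vec2, dest) {
  if (!dest) dest = vec;
  // Read the inputs first so it still works when dest === vec.
  const x = vec[0], y = vec[1], z = vec[2];
  const x2 = vec2[0], y2 = vec2[1], z2 = vec2[2];
  dest[0] = y * z2 - z * y2;
  dest[1] = z * x2 - x * z2;
  dest[2] = x * y2 - y * x2;
  return dest;
}

const up = [0, 1, 0];

// The problem version: no dest, so the "side" vector is the same array as
// the forward vector, and the camera's forward direction gets overwritten.
const forwardA = [0, 0, -1];
const sideA = cross(forwardA, up);
console.log(sideA === forwardA); // true

// The fixed version: the result goes into its own array.
const forwardB = [0, 0, -1];
const sideB = [0, 0, 0];
cross(forwardB, up, sideB);
console.log(forwardB); // unchanged
console.log(sideB);    // points along +X, the camera's right
```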

Next...

I dread implementing texture shadows in normal OpenGL. Do I dare? Probably not. I could move on to building a more generic engine type of thing, but I don't have any real project specs yet. I guess I'm up to fluffing around with the experimental parts. I will need to do some animation software eventually, so perhaps I should have a look at building a hardware skinning demo using some sort of JSON script for animation.